Abstract: China's artificial intelligence governance system embodies a unique "dual-track parallel" strategy. It originated from macro-level top-down planning, gradually evolved into "small incision" agile regulation targeting high-risk application scenarios, and is currently moving toward a unified, comprehensive fundamental legal framework based on balancing risks.

Introduction

Artificial Intelligence (AI), as a strategic technology leading a new round of technological revolution and industrial transformation, has become a core force in reshaping the global economic structure and altering the landscape of international competition. Against this background, the world's major countries have successively elevated the development of artificial intelligence to the level of national strategy. China has explicitly set the goal of becoming the world's major artificial intelligence innovation center by 2030; this ambitious goal provides the fundamental impetus for China's legislative and governance practices in the field of artificial intelligence. An analysis of China's artificial intelligence governance path shows that it is not a one-time, static legislative action, but a dynamic, evolving, and continuously iterative strategic process.

This article aims to trace the evolutionary context, core characteristics, and future trends of China's artificial intelligence legislation. In the author's view, China's artificial intelligence governance system embodies a unique "dual-track parallel" strategy: while strongly promoting technological innovation and industrial development at the national level, it simultaneously builds an increasingly comprehensive risk control and security assurance mechanism. This path starts from macro-level top-down strategic planning, gradually evolves into "small incision" precise regulation and agile supervision targeting high-risk application scenarios, and is currently moving toward a unified, comprehensive fundamental legal framework based on balancing risks.

To comprehensively display this complex picture, this article will be divided into three chapters. The first chapter will trace the historical evolution of China's artificial intelligence policies and legislation, outlining its clear context from strategic conception to specific regulation. The second chapter will focus on the judicial frontier, revealing the critical role played by judicial organs in filling legislative gaps and exploring rule boundaries through the analysis of a series of typical cases. The third chapter will be based on future legislative needs, attempting to conduct in-depth prospective analysis around six key topics.

Chapter 1: The Evolutionary Context and Core Characteristics of China’s Artificial Intelligence Legislation

China's artificial intelligence legislative process was not achieved overnight but followed a clear trajectory from macro to micro, from principles to rules, and from encouraging development to regulating development. This evolutionary process can be roughly divided into three interconnected and progressively advancing stages: top-level design and strategic initiation, focusing on specific risks and scenario regulation, and moving toward a comprehensive governance system.

1.1 Top-level Design and Strategic Initiation (2017-2021): Grand Strategic Foundation

The starting point of China's artificial intelligence governance can be traced back to July 2017, if not earlier, when the State Council issued the New Generation Artificial Intelligence Development Plan (hereinafter referred to as the "Plan" or "AIDP"). This plan systematically proposed a "three-step" strategic goal looking toward 2030, aiming to seize the global commanding heights of artificial intelligence development. It not only clarified specific tasks in technology research and development, industrial upgrading, and talent cultivation but, more importantly, set the tone for all subsequent relevant policies and legislative activities: driving the leapfrog development of artificial intelligence with national power. Policy documents in this stage are characterized by their macroscopic, forward-looking, and distinctly incentive-oriented nature; they focus on mobilizing national resources and establishing technological ambition rather than imposing binding legal obligations.

Parallel to industrial ambitions is the preliminary exploration of ethical boundaries. Against the backdrop of increasingly apparent social ethical challenges that artificial intelligence technology may bring, China began to build the "soft law" foundation for ethical norms. In 2021, the Cybersecurity Standard Practice Guide—Artificial Intelligence Ethical Security Risk Prevention Guidelines issued by the National Information Security Standardization Technical Committee (TC260), and the New Generation Artificial Intelligence Ethics Norms issued by the National New Generation Artificial Intelligence Governance Professional Committee, are representative achievements of this period. These documents systematically proposed core ethical principles such as "people-oriented" (以人为本), "intelligence for good" (智能向善), "secure and controllable" (安全可控), and "fairness and justice" (公平公正) for the first time, and emphasized integrating ethical considerations into the entire lifecycle of artificial intelligence research and development, design, and application. Although they do not possess mandatory legal force, they established a value orientation for the entire industry and provided a theoretical basis and consensus on principles for the subsequent formulation of hard laws.

Overall, this initial stage reflected typical state-led industrial policy thinking. Its primary goal was to create the most favorable environment for emerging industries to flourish, using strategic guidance and ethical advocacy to "draw the runway" and "set up lighthouses" while leaving ample space for subsequent, more refined legal regulation.

1.2 Focusing on Specific Risks and Scenario Regulation (2022-2025): "Small Incision" Agile Governance Mode

With the explosive development of artificial intelligence technology, especially generative artificial intelligence, its potential risks quickly surfaced. Problems such as false information, algorithmic discrimination, misuse of personal information, and intellectual property infringement posed direct threats to public interests and individual rights and interests. Facing these imminent challenges, China's governance strategy shifted from macro planning to more precise and pragmatic "small incision" (小切口) legislation.

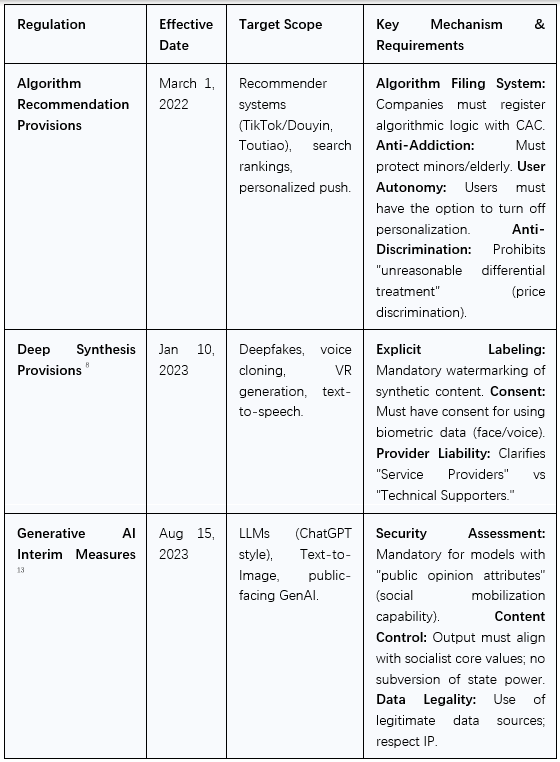

A defining feature of this stage is that regulatory agencies quickly issued a series of departmental rules and normative documents targeting specific technologies and scenarios. The Internet Information Service Algorithmic Recommendation Management Provisions, implemented in 2022, aimed to solve problems such as "big data discriminatory pricing" (大数据杀熟) and information cocoons. The Provisions on the Administration of Deep Synthesis of Internet-based Information Services, implemented in 2023, took direct aim at the risks brought by the abuse of "deepfake" technology.

Among them, the most significant milestone is the Interim Measures for the Management of Generative Artificial Intelligence Services, issued jointly by the Cyberspace Administration of China (CAC) and six other departments in July 2023. As the world's first national-level regulation specifically targeting generative AI, it embodies China's core governance concept of "placing equal emphasis on development and security". On the one hand, these Measures encourage technological innovation; on the other hand, they draw clear legal red lines, such as requiring entities "providing generative artificial intelligence services with public opinion attributes or social mobilization capabilities" to conduct security assessments. Service providers are responsible for the legality of training data sources, must strictly label generated content, and must establish sound user complaint mechanisms. It is worth noting that its "interim" nature reflects the logic of agile governance: against the background of rapid technological iteration, first respond quickly to the most prominent risks through a temporary regulation, accumulate regulatory experience in practice, and lay the foundation for formulating more mature and stable laws in the future.

Accompanying these regulations is the intensive introduction of a series of national standards. The Basic Security Requirements for Generative Artificial Intelligence Service (TC260-003-2024) and the Cybersecurity Technology—Generative Artificial Intelligence Pre-training and Optimization Training Data Security Specification (GB/T 45652-2025) issued by the National Information Security Standardization Technical Committee (TC260) transform the principled requirements in laws and regulations into measurable and verifiable technical indicators. This "Law + Standard" dual-drive mode constitutes a major feature of China's AI governance, ensuring the implementability of regulatory requirements.

Table 1: The Triad of Chinese AI Regulations

1.3 Moving Toward a Comprehensive Governance System: From Technical Frameworks to a Unified Code

After accumulating rich practical experience through "small incision" legislation, China's artificial intelligence governance began to move toward a more systematic new stage. Its goal is to integrate the previously scattered rules and build a unified, coordinated, comprehensive legal framework.

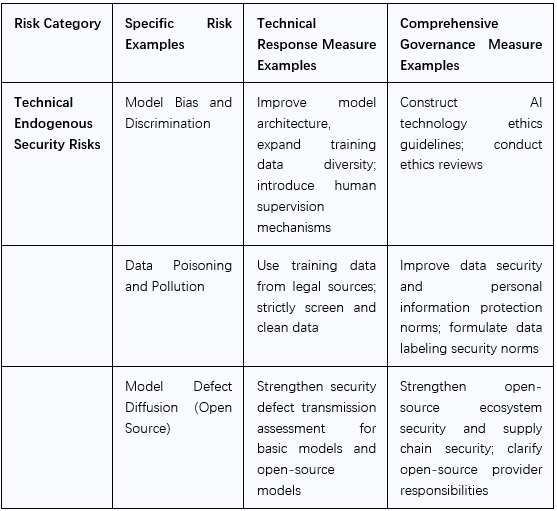

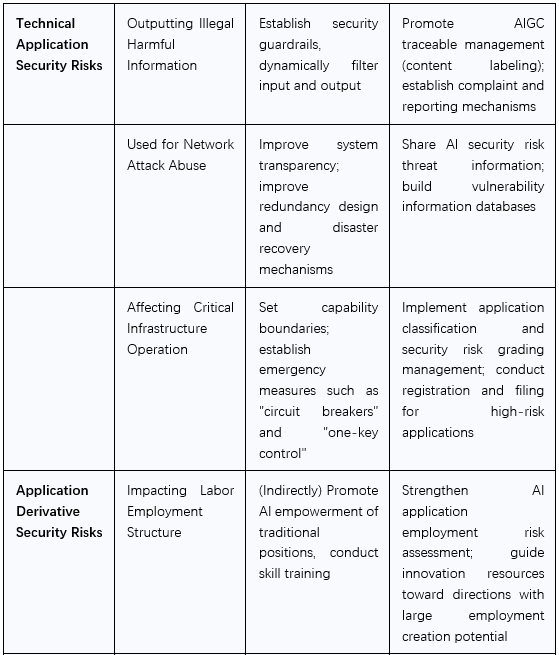

The groundwork for this stage is the Artificial Intelligence Security Governance Framework, released by the National Information Security Standardization Technical Committee (TC260) in September 2024 and quickly iterated to version 2.0 in September 2025 (hereinafter referred to as "Framework 2.0"). It systematically proposed a governance methodology based on risk management for the first time, classifying artificial intelligence security risks into three major categories: Technical Endogenous Security Risks (such as algorithmic bias, model defects), Technical Application Security Risks (such as cyber attacks, content security), and Application Derivative Security Risks (such as ethical impact, social impact). On this basis, Framework 2.0 advocates risk classification management and agile governance, that is, scientifically assessing risks along dimensions such as application scenario, intelligence level, and scope of impact, and adopting adaptive and differentiated governance measures. This complete theoretical system helps supply the legislative logic for a future unified Artificial Intelligence Law.

The legislative process of the high-profile draft Artificial Intelligence Law also reflects this evolution from prudence to maturity. The draft was listed as a preparatory deliberation item in the State Council's 2024 Legislative Work Plan, but in the NPC Standing Committee's 2025 Legislative Work Plan, the phrasing was adjusted to "relevant parties shall grasp the conduct of research and drafting work and arrange for deliberation as appropriate." This adjustment does not mean that legislation has stalled; rather, it reflects strategic prudence. It indicates that the legislative body is fully absorbing the experience of previous interim regulations, governance frameworks, and judicial practices, striving to ensure that the law eventually introduced can withstand the tests of technological development and practical application.

The author believes that this unique evolutionary path reveals a deep logic of China's AI governance: an iterative loop of "Regulation - Learning - Integration." First, facing emerging risks, react quickly and conduct pressure tests through "small incision" interim regulations; this is equivalent to establishing a regulatory "test field" in the real world. Second, learn from the practice of these "test fields," identifying real regulatory difficulties, legal loopholes, and industry pain points. Finally, systematically integrate, refine, and distill these practically tested experiences and lessons into a theoretical system, and ultimately codify them in the unified Artificial Intelligence Law. Therefore, the future Artificial Intelligence Law will not be a castle in the air, but a highly pragmatic and forward-looking law deeply rooted in China's local practice.

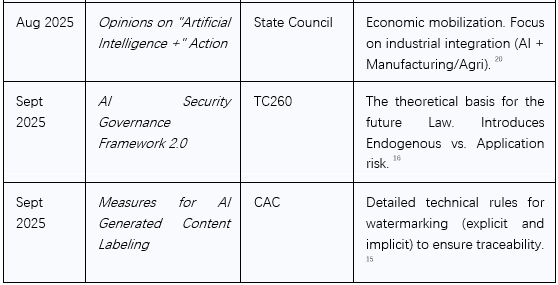

To clearly display this evolutionary process, the following table sorts out the key legislative and policy milestones in the field of China's artificial intelligence.

Table 2: Key Legislative and Policy Milestones in China’s Artificial Intelligence

Chapter 2: Judicial Practice in Rule Exploration: Insights from Typical Cases

Against the background that a formal, unified Artificial Intelligence Law has not yet been introduced, China's judicial system, especially the professional courts represented by the three Internet Courts, is playing the role of "de facto rule shaper." By hearing a series of frontier and difficult cases, courts not only settle disputes in specific cases but, more importantly, proactively interpret existing laws, extending their scope of application to new scenarios brought by artificial intelligence, thereby exploring and establishing a series of important adjudication rules in practice. These judicial precedents act as pathfinders, providing valuable practical experience and theoretical material for future legislation, forming a dynamic feedback loop between the judiciary and legislation.

2.1 Rights Attribution of AI-Generated Content: Flexible Exploration of "Process-Based Intellectual Outcome"

The copyright attribution of Artificial Intelligence Generated Content (AIGC) remains an unresolved legal question worldwide. The "First AI Text-to-Image Case" (Li v. Liu) heard by the Beijing Internet Court provided a "Chinese answer" to it. In this case, the court clarified for the first time that content generated using artificial intelligence, if it reflects the "original intellectual investment" of the human user, should be recognized as a work and protected by copyright law.

Of course, the "intellectual investment" standard has its limits. The Shanghai "Prompt Word" case and the Suzhou "Butterfly Chair Case" show, from the opposite direction, where this standard stops and that it applies symmetrically.

In the Suzhou "Butterfly Chair Case" (the nation's first case denying the copyrightability of AI text-to-image), the court denied the originality of the AI-generated picture. The reason was that the prompt words input by the plaintiff "belonged to a relatively simple superposition," and "descriptions of picture elements and layout composition lacked distinctiveness," and the defendant produced evidence proving that works with similar concepts had appeared before the plaintiff.

Similarly, in the Shanghai "Prompt Word" case, the court also determined that the prompt word "lacked the personalized characteristics of an author" and "belonged to conventional expression in the field."

Read together, the judgments of these three courts show that China's judicial practice has established a relatively loose yardstick for the "intellectual outcome" standard:

- When the human "intellectual manifestation" is unique, personalized, and involves high investment, their work (or contribution) can cross the threshold of "idea" and constitute a protected "expression."

- When the human "intellectual manifestation" is conventional, simple, and lacks distinctiveness, their contribution stays at the "idea" level and is not protected.

The above judgments, especially the Beijing precedent, have aroused discussion in various circles, with the biggest controversy lying in whether the court's determination has broken through the protection framework set by the existing copyright law.

2.2 Extended Protection of Personality Rights: The Legal Boundary of AI Synthesized Voice and Virtual Images

The development of generative AI has made the simulation of personal voices, portraits, and even overall personality images reach an unprecedented degree of realism, thereby triggering new types of personality right infringement risks. In response, judicial practice has shown agile adaptability and interpretative power, extending the boundaries of traditional personality right protection to these virtual fields.

In the "AI Voice Infringement Case" (Yin v. An Intelligent Technology Company) and the "AI Celebrity Voice Livestreaming Case" (Li v. A Cultural Media Company), the court established a core determination standard—"Identifiability" (可识别性). The court held that whether it is a voice synthesized by AI technology or AI processing of sound recordings, as long as its final effect allows the general public or listeners in relevant fields to establish a clear correspondence with a specific natural person based on characteristics such as timbre, intonation, and pronunciation style, then this AI-generated voice falls within the protection scope of that natural person's "voice interests." Without the person's permission, using this highly identifiable AI voice for commercial purposes (such as text-to-speech products, livestreaming sales) constitutes an infringement of their personality rights.

Going a step further, in the "AI Companion" case (He v. An Artificial Intelligence Technology Co., Ltd.), the court extended the protection scope from a single voice or portrait to a more comprehensive "virtual image." In this case, the defendant's software allowed users to upload the name and portrait of the public figure He, and "train" the AI together with other users, injecting specific personality, language styles, and interaction modes into it, thereby creating a highly anthropomorphic AI virtual character. The court determined that this behavior had transcended the use of a single portrait or name and was a comprehensive utilization of He's personality characteristics, forming a virtual personality highly associated with the person himself. Creating and using this virtual image without permission not only infringed on his name rights and portrait rights but also, because it might distort his personal image and reputation, infringed on the general personality rights constituted by personal dignity and personal freedom.

These judgments clearly indicate that China's judicial circle is constructing a multi-level personality right protection system to ensure that the personality rights and interests of natural persons are not weakened due to the virtualization and datafication of AI technology. Its core legal principle lies in: if the output of AI can objectively point to a specific, identifiable natural person, then the personality rights of that natural person should be protected by law.

2.3 Redefining Platform Responsibility: From "Safe Harbor" to Substantive Participant of Algorithms

In the era of artificial intelligence, online platforms are no longer merely passive display channels for user-generated content; their complex algorithm designs and operation rules often deeply intervene in or even dominate the generation and distribution of content. Judicial practice has keenly captured this change and has begun to re-examine and redefine the legal responsibilities of platforms, gradually moving beyond the traditional scope of the "Notice-Delete" Safe Harbor principle.

In the aforementioned "AI Companion" case, the platform argued that it only provided technical services, and the infringing content was uploaded by users, so it should be exempted from liability under the Safe Harbor principle. However, the court rejected this claim. The court deeply analyzed the platform's product design and algorithm mechanism and found that the platform was not a neutral technology provider. Instead, through setting rules and designing "training" algorithms, it actively organized, encouraged, and guided users to participate in the process of creating infringing virtual images and directly benefited from this core function. Therefore, the court determined that the platform played the role of a "substantive participant" and "co-creator" in the generation of infringing content and should bear direct tort liability as a content service provider.

In another "Platform Misjudgment of AIGC Case" (Tang v. A Technology Company), the platform was judged to bear breach of contract liability because its AI detection algorithm wrongly marked user-original content as AI-generated and imposed penalties. The judgment logic of this case is particularly critical: the court believed that the platform, as the controller of the algorithm and the maker of the decision, bears a "moderate duty of explanation and verification" for the results of its automated decision-making. When a user raises an objection to the algorithm's determination, the platform cannot simply shirk responsibility on the grounds of "algorithm results" but should provide a reasonable explanation regarding its judgment basis and decision logic. Since the platform failed to do this, its penalty behavior lacked a factual basis and constituted a breach of contract.

These two cases collectively reveal an important judicial trend: courts are penetrating the appearance of "technological neutrality" of platforms to conduct a substantive review of the actual role of their algorithms in the content ecosystem. If the platform's algorithm itself deeply participates in, organizes, or guides the occurrence of infringing acts, its legal liability will upgrade from indirect liability to direct liability. Meanwhile, the transparency and explainability of the platform regarding its algorithmic decisions are also becoming an important legal obligation. This judicial orientation undoubtedly poses higher compliance requirements for platform enterprises, urging them to embed legal responsibilities and ethical considerations into the core of algorithm design while pursuing technological efficiency.

2.4 Expansion of Fair Use: Defining Legal Responsibility with Classified Policies and an Inclusive and Prudent Attitude

The Hangzhou Internet Court, in the "Shanghai Xinchuanghua Cultural Development Co., Ltd. v. Hangzhou Jellyfish Intelligent Technology Co., Ltd. Copyright Infringement and Unfair Competition Case" (hereinafter referred to as the "Ultraman Case"), proposed adopting a classified and layered strategy for the infringement determination of generative artificial intelligence services:

- Data Input and Data Training Stage: The main purpose of this stage is to learn and analyze the thoughts, emotions, language features, and unique styles expressed in prior works, and extract rules and patterns from them to facilitate subsequent transformative creation of new works; it is appropriate to adopt a relatively loose and inclusive determination standard.

- Content Output and Content Use Stage: This stage faces the public directly and involves the dissemination and diffusion of infringing content; it is appropriate to adopt a relatively strict determination standard.

Regarding the use of works in the AI training stage, the court determined: The creation and development of generative artificial intelligence require the introduction of massive amounts of training data at the input end, which inevitably involves using others' works. In the data training stage, if the purpose of using others' works is not to reproduce the original creative expression of those works, and the use neither affects the normal use of the copyrighted works nor unreasonably damages the legitimate interests of the relevant copyright owners, it can be considered fair use. In this sense, the case marks an "expansion" of the copyright fair use system in China's judicial practice. Regrettably, since this case involved user-uploaded data, key issues such as whether the "toxicity" of training data makes the fair use principle inapplicable remain to be addressed in later cases.

In summary, these judicial precedents collectively constitute a dynamically evolving "AI Common Law." They provide valuable adjudication guidelines in specific scenarios for the attribution of rights in AIGC, the protection boundaries of personality rights, the allocation of liability for the use of copyrighted training data, and the attribution of platform liability. This judicial activism not only effectively fills the gaps in current laws but, more importantly, "pressure tests" frontier legal issues through individual case adjudication. The legal concepts established are highly likely to be absorbed and adopted by the future Artificial Intelligence Law, thereby completing the transformation from judicial practice to statutory legislation.

Chapter 3: Future AI Legislation: In-depth Analysis of Key Topics

However, as the systemic impact of artificial intelligence technology becomes increasingly apparent, the agile but dispersed governance model described above has also begun to expose its inherent limitations. Currently, promoting a unified, comprehensive Artificial Intelligence Law may be the long-term solution. Only by formulating a comprehensive artificial intelligence legal system at the high hierarchical level of "Law" can we effectively coordinate the interests of all parties, establish a unified artificial intelligence governance system at the national level, and exert the fundamental, stable, and long-term role of the rule of law in the era of artificial intelligence.

Based on an in-depth understanding of the evolutionary context of China's AI governance and judicial practice, this chapter will focus on six core topics, systematically analyzing the institutional designs and core considerations that may be presented in the future unified Artificial Intelligence Law and related supporting regulations. These six topics—supporting R&D, building infrastructure, improving ethics, monitoring risks, innovating regulation, and promoting healthy development—can be viewed as several entry points for China's governance concept of "equal emphasis on development and security."

3.1 Supporting Basic Theoretical Research of AI and R&D of Key Technologies

Policy Goal: China's national strategy has always placed achieving high-level self-reliance and self-strengthening in science and technology at the core position. The New Generation Artificial Intelligence Development Plan and the latest Opinions on Deepening the Implementation of the "Artificial Intelligence +" Action both repeatedly emphasize strengthening basic theoretical research on artificial intelligence, accelerating the promotion of major scientific discoveries from "0 to 1," and supporting independent innovation and breakthroughs in core technologies such as basic models and key algorithms. One of the primary tasks of legislation is to provide institutional guarantees and incentives for realizing this grand goal.

Probability Analysis: The future legislative framework will likely adopt a two-pronged strategy.

On the one hand, legislation is likely to build a secure and controllable open-source governance system. Framework 2.0 clearly proposes to "synchronously improve the security capability of the open-source ecosystem while cultivating and developing the open-source innovation ecosystem." This suggests that future laws will regulate open-source activities; specific measures may include:

- Clarifying the responsibilities of open-source providers: Require providers of open-source basic models to fulfill necessary risk notification obligations, such as attaching detailed technical documentation when releasing, explaining the model's known defects, potential biases, security loopholes, and scope of application.

- Defining "prohibited" usage behaviors: The law may authorize or encourage open-source communities and providers to clearly prohibit using models for illegal purposes (such as creating false information, conducting cyber attacks, or developing prohibited weapons) through open-source agreements.

- Granting certain liability exemptions: Liability exemption designs for open-source models will become a realistic consideration. But this "exemption" is not absolute; it has clear boundaries. If open-source model developers want to enjoy protection similar to a "Safe Harbor," they at least need to fulfill basic transparency obligations and risk prevention obligations, such as providing instruction documents and taking basic technical measures to limit the generation of illegal information.

On the other hand, legislation is likely to strengthen and refine intellectual property protection rules. As discussed in Chapter 2, internet courts in several places have already developed adjudication rules through judicial practice. The author suggests that the future Artificial Intelligence Law or supporting intellectual property regulations could elevate these judicial principles into clear legal rules. This may include:

- Protecting intellectual property in training data: Clarify the legal boundaries of using copyright-protected data or data rights in the model training stage and explore establishing reasonable use licensing or compensation mechanisms to respond to the concerns of data rights holders.

- Clarifying, step by step, the rights attribution principles for human-machine works: With "substantive contribution" (substantive intellectual investment) as the core standard, establish the attribution and allocation rules for AIGC rights in complex scenarios involving multiple participants such as developers, users, and data providers.

Such an encouraging and open legislative design helps to create a healthy development environment that not only incentivizes individuals and enterprises to pursue disruptive innovation but also ensures the overall health, security, and openness of the open-source ecosystem.

3.2 Promoting Artificial Intelligence Infrastructure Construction

To achieve comprehensive governance goals, unified, secure, and efficient legal infrastructure must be provided for the two cornerstones of artificial intelligence—"Data" and "Computing Power." The Opinions on the "Artificial Intelligence +" Action have deployed "strengthening intelligent computing power coordination" and "strengthening data supply innovation" as core basic support capabilities. Promoting infrastructure construction is not only a technical and investment issue but also requires a solid legal framework to solve a series of complex issues such as data property rights, data circulation, computing power scheduling, and network security.

At the computing power level, legislation can provide policy and legal bases for the construction of a "national integrated computing power network." This may include:

- Formulating safety standards for computing power infrastructure: The law will clarify the security protection levels and operational requirements for critical information infrastructure such as national intelligent computing centers and supercomputing clusters, ensuring the stability and reliability of computing power resources and preventing cyber attacks and malicious consumption.

- Regulating the scheduling and sharing of computing power resources: Establish interconnection standards and scheduling rules for cross-regional and cross-subject computing power resources through legislation, promoting the effective implementation of national projects such as "East Data, West Computing." At the same time, encourage the development of standardized and inclusive computing power services to lower the threshold for small and medium-sized enterprises (SMEs) to use AI.

At the data level, legislation will be dedicated to building a secure and efficient data element market.

- Improving data property rights and circulation rules: Based on the existing Data Security Law and Personal Information Protection Law, further clarify the ownership definition, usage boundaries, and revenue distribution mechanisms of different types of data (personal information, industrial data, public data) in AI scenarios. Especially for the situation where model training uses a large amount of publicly available data on the network, legislation needs to define the compliance boundaries of automated data collection more clearly.

- Promoting the opening of high-quality public data: The law will promote the establishment of a system for the conditional and compliant opening of public data, encouraging governments and public institutions to open high-quality scientific and government data sets to society after desensitization processing to support basic research and model training; this is in line with the requirements in the Opinions on the "Artificial Intelligence +" Action.

- Cultivating the data service industry: Legislation will support and regulate the development of emerging data service formats such as data labeling, data cleaning, and data synthesis, providing high-quality "data fuel" for the artificial intelligence industry while ensuring the compliance and security of the entire data processing process.

By providing clear legal guarantees for the construction and operation of these two major infrastructures of computing power and data, China aims to pave a solid and secure track for the takeoff of the entire artificial intelligence industry.

3.3 Improving Artificial Intelligence Ethical Norms

The author predicts that future legislation will promote artificial intelligence ethics from "soft initiatives" to "hard constraints." From the early New Generation Artificial Intelligence Ethics Norms to the clear requirement of "improving artificial intelligence laws, regulations, and ethical guidelines" in the Opinions on the "Artificial Intelligence +" Action, it can be seen that the institutionalization and legalization of ethical governance have become a consensus at the national level.

The author understands that future legislation may well give AI ethics a "hard landing" through "institutional embedding" and "process coercion."

- Ethics Review System: The current Measures for Scientific and Technological Ethics Review (Trial) already require units engaged in specific AI scientific and technological activities to set up scientific and technological ethics (review) committees. The future Artificial Intelligence Law is highly likely to generalize and mandate this requirement, especially for AI development and application involving high-risk fields such as life and health, public safety, judicial law enforcement, and finance and insurance.

- Legalization of "Value Alignment": Framework 2.0 repeatedly emphasizes the concept of "value alignment." Future legislation may require developers and providers of AI systems to take technical and management measures to ensure that the design, training data, and output of their products and services comply with China's laws, regulations, social morality, and ethical requirements, and that they effectively avoid generating content that discriminates on grounds such as ethnicity, faith, or gender. Performance of this obligation will also likely fall within the scope of algorithm filing and security assessment reviews.

- Protecting the rights and interests of vulnerable groups: Legislation may pay special attention to the impact of artificial intelligence on groups such as minors, the elderly, and people with disabilities, requiring related products to fully consider their usability, safety, and special needs in functional design and service modes so as to prevent the "intelligent divide" from widening. This concern is already reflected in the Interim Measures for the Management of Generative Artificial Intelligence Services.

3.4 Strengthening Security Risk Monitoring and Assessment

Artificial intelligence risks are characterized by complexity, suddenness, and transmissibility. The key question for future legislation is how to design a classified and graded assessment system that comprehensively covers the various risks, is workable in practice, and can adapt to rapid technological change.

Considering the framework nature of future legislation, the author believes that model classification supervision will not be overly complicated. Strong regulatory measures will mainly apply to AI systems with high systemic risks. This includes general large models with huge parameter scales, large numbers of users, and social mobilization capabilities, and may also include specialized models applied in critical infrastructure fields such as finance, medical care, and autonomous driving.

Table 3: Risk Classification Proposed in "Artificial Intelligence Security Governance Framework 2.0"

3.5 Innovative Regulation

Innovative regulation is not easy. Regulatory tools must possess technological sensitivity and foresight to effectively deal with new challenges such as model black boxes and rapid algorithm iteration. At the same time, supervision needs to shift from single government leadership to collaborative governance involving the government, industry, and society.

The author understands that future security supervision will likely unfold around the following institutional tools.

- Deepening and expanding the algorithm filing system: The filing system currently implemented for recommendation algorithms and generative AI will be established as a basic regulatory tool and further deepened. Future filing requirements may well become more detailed, enabling regulatory agencies to understand the fundamentals of high-risk algorithms in advance and achieve "ex-ante" supervision.

- Mandatory content labeling and traceability management: The Measures for the Labeling of Artificial Intelligence Generated or Synthesized Content, effective September 2025, elevated content labeling from an "industry initiative" to a "legal obligation." The law will require all AIGC (including text, images, audio, and video) to carry explicit or implicit labels so that its source can be traced. This system is a key technical regulatory means of dealing with false information, protecting intellectual property rights, and maintaining the health of the content ecosystem.

- Building a full-lifecycle security management chain: The law will likely clarify the security responsibilities of different subjects in the AI value chain. From model algorithm developers (who need to ensure the endogenous security of their models and test them thoroughly), through service providers (who need to establish security management mechanisms and fulfill content audit and user protection obligations), to system users (who need to abide by laws and agreements and must not abuse the technology), the law will build a complete closed loop of responsibility, ensuring that every link has a clearly responsible party and achieving whole-process governance.

- Establishing a multi-party collaborative governance mechanism: Legislation will likely draw on the data governance model, encouraging and regulating industry associations in formulating practice guidelines that exceed the legal minimum, supporting third-party professional institutions in conducting relevant evaluations and certifications, and establishing a public-facing mechanism for receiving reports of risks and hazards. This will form a diversified co-governance pattern of government supervision, industry self-discipline, social supervision, and user participation, improving the resilience and effectiveness of the governance system.

3.6 Promoting the Healthy Development of Artificial Intelligence

The author believes that promoting the healthy development of artificial intelligence is the ultimate goal of future legislation and governance activities—that is, ensuring that while effectively preventing risks, supervision can maximally release the huge potential of artificial intelligence as the core engine of "New Quality Productive Forces," serving high-quality economic development and the improvement of social welfare, and ultimately realizing the new forms of intelligent economy and intelligent society depicted in the Opinions on the "Artificial Intelligence +" Action.

The challenge here is to avoid stifling innovation vitality due to excessive regulation or "one-size-fits-all" regulations, ensuring that the legal framework has sufficient flexibility and foresight to leave space for the development of new technologies, new models, and new formats.

Therefore, the author understands that the future Artificial Intelligence Law will not only be a "Management Law" but also a "Promotion Law," specifically manifested in the following points:

- Setting up "Regulatory Sandboxes" and pilot demonstration systems: To encourage innovation, the law is highly likely to adopt the "inclusive and prudent" principle proposed in Framework 2.0, or to set up "regulatory sandboxes" or secure and controllable pilot areas, allowing innovative enterprises to test their frontier technologies and business models within a limited scope and under controllable risks, with a certain margin for fault tolerance and correction.

- Strengthening legal guarantees for talent cultivation and international cooperation: The law will connect with the national talent strategy and diplomatic strategy. On the one hand, it will provide legal support for strengthening the talent training system in fields such as AI security design, development, and governance. On the other hand, it may integrate into domestic law the international cooperation principles advanced in instruments such as the Global AI Governance Initiative, including promoting technology inclusiveness, supporting open-source sharing, and enhancing the voice of developing countries in global governance, thereby making domestic legislation a carrier for practicing China's global governance concepts.

- Guaranteeing application landing and industrial empowerment: Future legislation will provide guarantees for the in-depth implementation of the "Artificial Intelligence +" action. For example, formulating large model and agent application security guidelines for important industry fields (such as energy, finance, transportation, and medical care) would provide a clear roadmap for the safe and effective landing of AI technology in these key fields, thereby safely releasing the potential of industry applications.

In summary, these six key topics will outline the core parts of China's future artificial intelligence legislation. The author understands that it will be a finely balanced, multi-objective driven legal system: it has both strict risk control bottom lines and flexible innovation incentive mechanisms; it emphasizes autonomy and controllability while upholding openness and cooperation; it is based on solving urgent domestic governance problems while harboring the ambition to shape leading governance rules.

Ultimately, what China is building is a composite governance system led by a fundamental law, supported by specialized and vertical regulations and normative documents, supplemented by an active judiciary, and deeply integrating industrial policy, security supervision, and ethical guidance. The final effect of this system will not only profoundly shape the development trajectory of China's digital economy and society in the coming decades but will also leave a distinctive mark on the future landscape of global artificial intelligence governance.

Of course, the life of the law lies in execution—how to truly maintain that delicate balance between innovation and security in practice is where the challenge begins.

Conclusion

This article demonstrates that China's AI governance is neither a monolithic crackdown nor a libertarian free-for-all. It is a carefully engineered "Dual Drive" system. The state uses "Small Incision" regulations to surgically remove risks (deepfakes, addiction) while using massive industrial policy (AIDP, AI+ Action) to fuel growth. The judiciary acts as the stabilizer, creating pragmatic compromises (like the Ultraman ruling) that allow the industry to function within these constraints. Ultimately, China’s Artificial Intelligence Law will function more as a framework law (a skeletal structure) — it will not feature an excessive number of provisions, but will lay down fundamental principles. In the future, it will be supplemented and enriched by various administrative regulations, departmental rules, and judicial interpretations, which will flesh out this skeletal framework and enable it to evolve into a comprehensive and robust legal regime.